Fujiko Hemming (Ten Cat Poses: Dango-kun), guaranteed authentic woodblock print, Acre

(Tax included) Shipping included

Item description

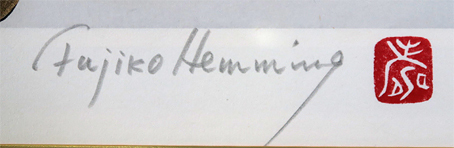

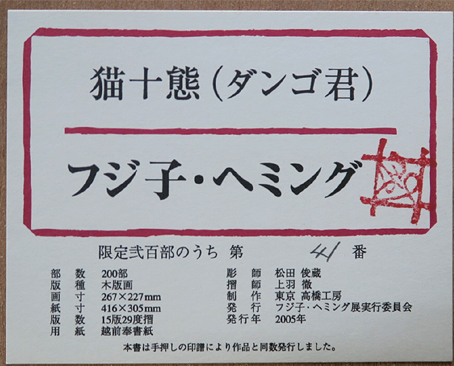

Technique: Woodblock print

Framed size: 490 mm high × 420 mm wide

Image size: 267 mm high × 227 mm wide

Artist's signature: Hand-signed by the artist

Edition size: 200 copies

Condition: Good

Remarks: *

Price: 42,000 yen

*Unless a frame is described as a "new frame", it may show scuffs, scratches, or similar wear from age.

We guarantee the authenticity of every work we list. Should a work prove to be a forgery, we will handle the return and refund.

Please also visit our website, which is well regarded for its low prices and its wide selection of works by Fujiko Hemming and other artists.

https://www.artcreation.co.jp/fujikohemming.html

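Item information

Category

Shipping cost: Shipping included (paid by the seller)

Shipping method: Yuyu Mercari-bin

Ships from: Miyagi Prefecture

Days to ship: Ships in 1 to 2 days

Mercari's safety measures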

Payment is made to the Mercari administration office and transferred to the seller after the transaction has been rated.

Seller

Fast shipping

This seller ships within 24 hours on average.